I build & operate production systems at scale.

Systems Engineer & Founder.

Based in Los Angeles.

Keeping hundreds of environments running.

Engineer building systems that run themselves.

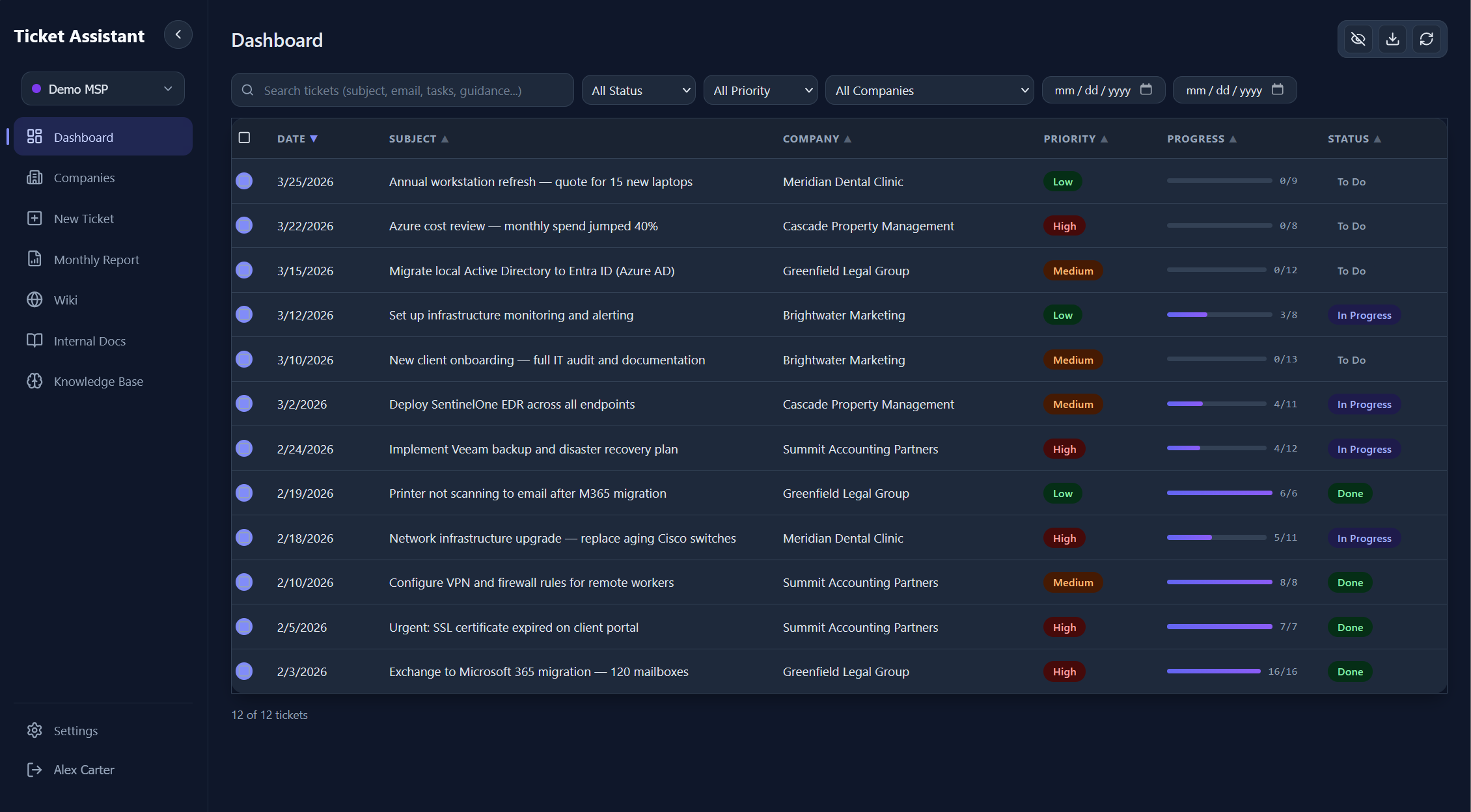

I work on production infrastructure and applications that support real users at scale. That includes everything from cloud environments and DNS to backend services, automation pipelines, and legacy systems that need to stay online no matter what.

Most of my experience comes from operating live systems, not just building them. Debugging broken payment flows, tracking down infrastructure issues, and keeping hundreds of environments stable have shaped how I approach engineering: keep it simple, make it reliable, and remove as many failure points as possible.

I tend to focus on turning messy, manual processes into clean, repeatable systems. Whether it's internal tools, data pipelines, or full application workflows, the goal is always the same: make it predictable, scalable, and low maintenance.

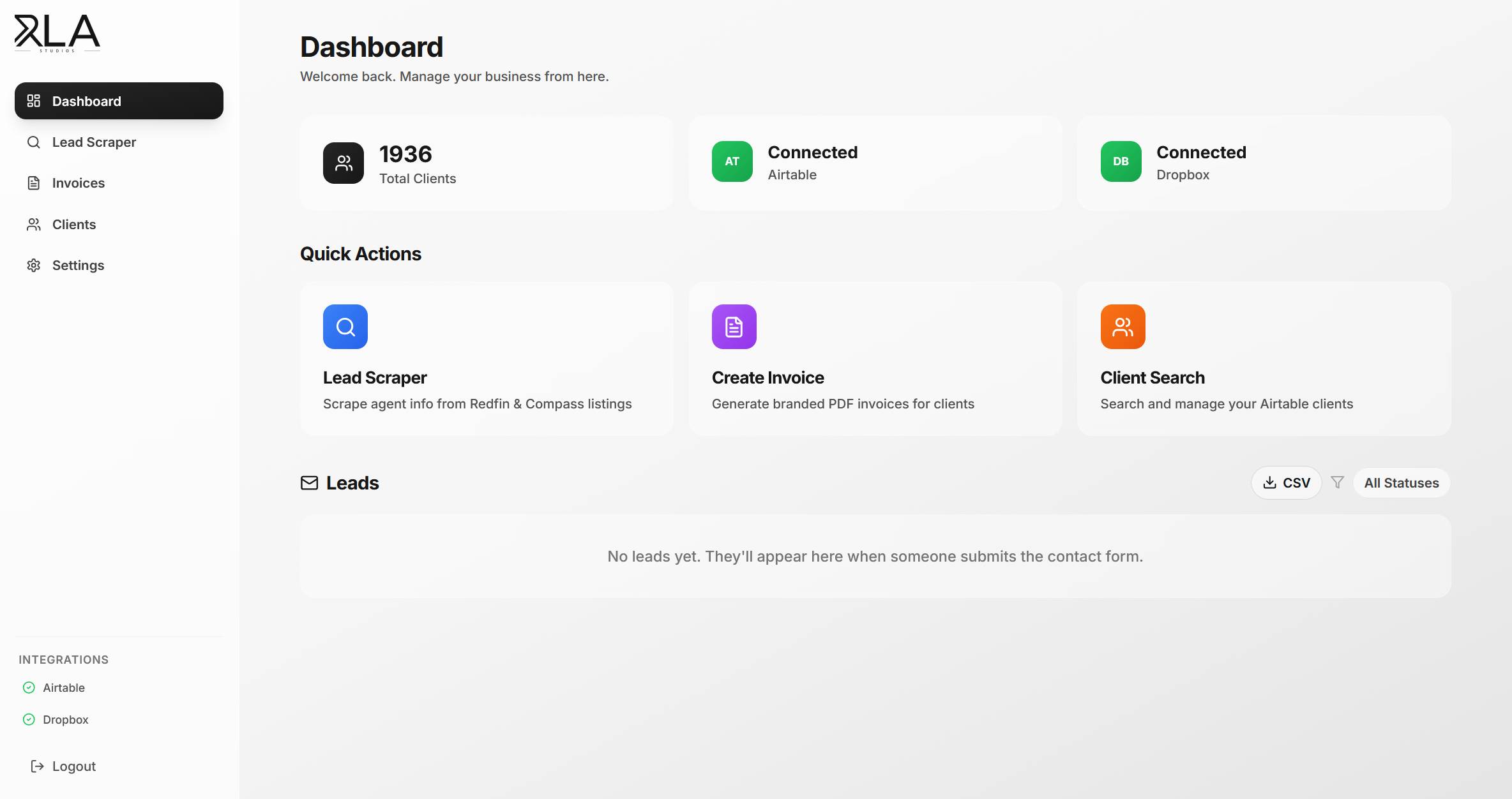

RLA Studios came out of that same mindset. What started as creative work evolved into building the systems behind it, automating everything from client intake to delivery so the studio can scale without adding operational overhead.

I'm less interested in perfect architecture diagrams and more in systems that actually hold up in production, under load, with real users.

Outside of work, I'm usually watching tennis or F1, which probably explains why I care a bit too much about performance, consistency, and things working exactly the way they should.